By Neil Nachbar

Doug Sawyer was diagnosed with amyotrophic lateral sclerosis (ALS), also known as Lou Gehrig’s disease, 11 years ago. The only muscles he can still control are those that move his eyes.

Despite his disability, Sawyer still works as an engineer from his home, designing electronics for Hayward Industries. Using only his eyes, the 57-year-old writes reports and other papers, draws pictures and schematics, talks on the phone, sends text messages and emails, and attends meetings online multiple times a week.

However, Sawyer’s gaze weakens as he gets tired, making the technology he currently uses ineffective. That’s why the Seekonk, Massachusetts, resident was eager to work with University of Rhode Island Assistant Professor Yalda Shahriari to develop a new way for ALS patients to communicate.

Translating Brain Signals

Shahriari and her team of student researchers in URI’s College of Engineering are developing a way for those with severe motor deficits such as ALS to communicate using brain signals, eliminating the need for patients to maintain fine eye-gaze control.

Her project, funded by a National Science Foundation (NSF) grant, has two main goals. The first is to develop multimodal personalized algorithms that improve the robustness of brain-computer interface (BCI) systems for patients with severe motor deficits. The second is to develop an autonomous hybrid system for non-communicative patients who have no residual motor control, such as those who lose their fine eye-gaze control in the late stages of ALS.

Through longitudinal recordings of several patients with ALS, taken during this and previous projects, Shahriari and her group have noticed significant day-to-day variations in BCI performance.

“These variations are speculated to be associated with several factors, including cognitive fluctuations and environmental factors,” said Shahriari. “Developing personalized algorithms will enable us to predict these fluctuations and optimize performance based on each patient’s specifications and needs.”

Using Dual Techniques

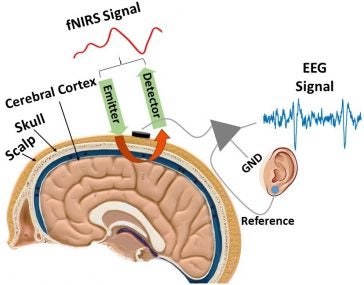

To ensure more accurate readings of brain activity, two non-invasive techniques are used simultaneously: electroencephalography (EEG) and functional near-infrared spectroscopy (fNIRS).

EEG detects electrical activity in the brain using small metal discs called electrodes. fNIRS is an optical imaging technique in which an emitter transmits near-infrared light and a detector picks up the light reflected back from the surface of the brain. This technique measures changes in the concentration of oxygenated hemoglobin in the brain. The higher the concentration, the more activity is taking place.

“We will use a hybrid of EEG and fNIRS signals to compensate for each neuroimaging modality shortage and use the complementary features obtained from each modality to improve our system,” said Shahriari.

For patients in the later stages of ALS who experience cognitive dysfunction, such as memory loss and the inability to maintain eye gaze on objects, fNIRS has been shown to be a more accurate method of measurement.

The Visuo-Mental Dual Task

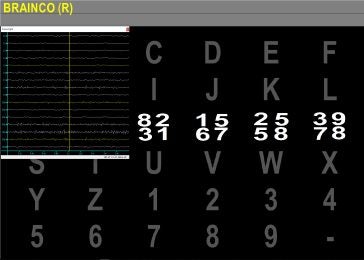

Shahriari and her students have developed a visuo-mental dual-task paradigm that relies on conventional oddball-based protocols but also requires subjects to perform mental arithmetic. This BCI approach works by displaying a grid of letters and numbers and intermittently flashing an image (a matrix of digits) over each row and column.

“By giving the patient higher demanding tasks to focus on, we can trigger several cognitive functions and extract the associated signatures or neural biomarkers,” said doctoral student Bahram Borgheai. “The computer can then decode the pattern of neural activities that appear after the patient performs the tasks. The patterns can be used for diagnostic and communication purposes.”
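For readers curious how a row-and-column flashing scheme can turn brain responses into a selected character, here is a minimal sketch in Python. It is not the URI team's algorithm. The 6x6 grid layout, the `decode_selection` function and the per-flash scores are all hypothetical, standing in for whatever classifier output a real system would compute from EEG and fNIRS recordings.

```python
from collections import defaultdict

# Hypothetical 6x6 speller grid of letters and digits (layout is an assumption).
GRID = [
    "ABCDEF",
    "GHIJKL",
    "MNOPQR",
    "STUVWX",
    "YZ1234",
    "567890",
]


def decode_selection(flashes):
    """Pick the grid symbol whose row and column drew the strongest responses.

    `flashes` is an iterable of (kind, index, score) tuples, where kind is
    "row" or "col", index is 0-5, and score is a hypothetical classifier
    output: higher means the response looked more like an attended target.
    """
    totals = {"row": defaultdict(float), "col": defaultdict(float)}
    counts = {"row": defaultdict(int), "col": defaultdict(int)}
    for kind, index, score in flashes:
        totals[kind][index] += score
        counts[kind][index] += 1

    def best(kind):
        # Average over repetitions so one noisy flash does not dominate.
        return max(totals[kind], key=lambda i: totals[kind][i] / counts[kind][i])

    return GRID[best("row")][best("col")]


# Made-up scores: two rounds of flashes in which row 2 and column 3 stand out.
example = [
    (kind, i, 0.9 if (kind, i) in {("row", 2), ("col", 3)} else 0.1)
    for _ in range(2)
    for kind in ("row", "col")
    for i in range(6)
]
print(decode_selection(example))  # prints P
```

The idea is that the patient attends to one symbol, so only the flashes covering that symbol's row and column should produce a distinctive response. The intersection of the strongest row and the strongest column identifies the symbol.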

More Patients Means More Data

Shahriari has collaborated with the National Center for Adaptive Neurotechnologies on projects since 2012. With the support of the national center, the Rhode Island Chapter of the ALS Association and Rhode Island Hospital, the professor would like to add more patients to the study.

“Our analysis of the data becomes much more powerful if we can significantly increase the number of patients in the study,” said Shahriari.

Patients will be asked to wear a cap with sensors attached that can record brain activity in the comfort of their homes or at a care center. Recordings of those with healthy brains will take place in Shahriari’s Neural Processing and Control Laboratory in URI’s Fascitelli Center for Advanced Engineering. All data processing and analysis will be conducted in the lab.

Once enough patients have volunteered to participate in the research project, Shahriari plans to partner with more local hospitals and medical schools to take advantage of their clinical expertise.

Paying It Forward

Sawyer has relished the opportunity to participate in the study.

“Taking part in the brain activity study has been very rewarding,” said Sawyer. “I enjoy learning new things and staying abreast of the latest technology. Dr. Shahriari and her team have been willing to share their progress. They make me feel as if I’m part of their team and not just a test number.”

Sawyer hopes that his participation will help Shahriari develop a way for ALS patients to work and communicate after their motor functions have ceased.

“I don’t consider myself a victim of ALS and I don’t consider myself handicapped,” Sawyer said. “I just need help sometimes. There are people out there far worse off than me. Hopefully the time I give to Dr. Shahriari will someday improve their lives.”