What happens to science when researchers begin saying “the model found” instead of “we found”? For computational biologist Nic Fisk, the answer is not simply about language but about how responsibility is assigned in scientific work.

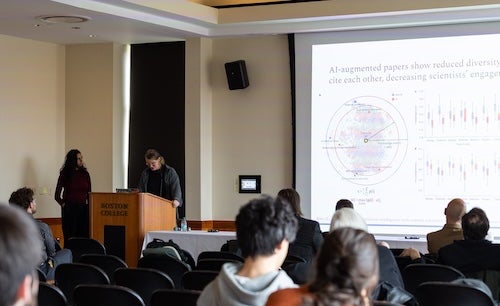

That question has been emerging across Fisk’s research. At a recent philosophy conference hosted by Boston College, Fisk, an assistant professor of cell and molecular biology at the University of Rhode Island, found themselves in an unusual position: one of only two scientists in a room composed largely of philosophers and theologians.

The conference focused on “logos” (language, speech, and rationality) and on what it means for artificial intelligence systems to appear to reason or communicate. While many participants debated whether AI can truly “understand” language, Fisk explored a related but distinct question: how AI is changing the way scientists describe their work.

Fisk has noticed a shift in scientific writing: researchers are increasingly attributing findings to computational systems. Where papers once said “we found,” researchers now more often write “the model shows” or “the model finds.” That linguistic shift, Fisk says, raises deeper questions about responsibility in science. Traditionally, scientific practice has emphasized falsifiability and human responsibility for interpretation and testing.

To move beyond anecdotal observation, Fisk conducted a large-scale analysis of more than 20 million scientific abstracts from PubMed. The study examined how often scientific writing attributes agency to researchers versus computational tools, and how the use of passive voice changed over time.

The results showed a noticeable shift beginning around 2016, accelerating after 2018 and again after 2021. “We’re attributing agency to methods much more,” Fisk says. Importantly, the change was not explained by passive voice alone but appeared closely linked to the rise of machine learning and AI tools in research.

Fisk emphasizes that the concern is not about scientific rigor. “It’s not that what they’ve done is unscientific,” they say. “But there is a shift in how people talk about science.” That shift, they argue, raises questions about whether scientists are unintentionally relocating responsibility from researchers to computational systems. “If you are not an agent, if you do not have control, you cannot have responsibility,” Fisk says.

Similar questions have appeared across Fisk’s work in other fields. At the University of Rhode Island’s recent Innovative Education conference, Fisk and collaborator Annemarie Vaccaro used computational methods to study how education research constructs categories such as disability, race, and ethnicity.

Their analysis found that peer-reviewed education papers tend to describe disability in more negative terms and more often frame it as a deficit or problem, while policy documents and teaching resources are more likely to treat disability as an identity.

Fisk argues that this difference illustrates how academic norms shape what is treated as an object of study – that is, how researchers assign meaning and agency to the subjects they analyze. Taken together with their earlier work in computational science, these findings led Fisk to a broader question: how do disciplinary norms shape where agency is located in research, whether in people, systems, or computational methods?

That question resurfaced at a Women in Bioinformatics meeting, where Fisk spoke to computational biologists about scientific practice in the age of artificial intelligence. There, the focus shifted from how research describes its subjects to how scientists understand their role in increasingly AI-assisted research processes. Fisk argued that interdisciplinary collaboration can help clarify assumptions that often remain implicit within a single field. When researchers from different disciplines work together, they must explicitly define terms and methods because collaborators do not always share the same assumptions or shorthand.

That theme also appeared at a recent Rhode Island-based biomedical conference focused on artificial intelligence in health care, where Fisk participated in a panel on the state of AI in medicine. There, Fisk connected questions of scientific agency to clinical decision-making in oncology.

Drawing on their research, they described an association between how physicians conceptualize cancer – either as something internal to the patient or as an external invader – and the treatment strategies they tend to favor. While the analysis does not establish causality, Fisk says the findings suggest that metaphors used in clinical language may shape or reflect treatment decisions.

The consistent themes emerging across Fisk’s work also shape how they mentor students at URI, they note. One of their students, Gift Asefon, recently received a best poster award for breast cancer imaging research developed in the Fisk Lab. Asefon began the project without prior programming experience, an example Fisk uses to reinforce a broader point: scientific identity is shaped less by technical background than by the questions researchers choose to pursue and their ability to develop ways of answering them.

“It isn’t the skill set that defines a scientist,” Fisk says. “What makes a scientist is asking the questions you want the answer to.”

That emphasis on science as a communal, human practice becomes especially important as artificial intelligence becomes more deeply embedded in research. Across fields, Fisk returns to a central point: language matters. When researchers choose between “we found” and “the model found,” they are not simply describing results. They are deciding who is responsible for them.